The big hubbub about town recently is that Facebook manipulated the News Feeds of 689,000 users in the name of a psychological experiment.

The study, published in the Proceedings of the National Academy of Sciences (PNAS), controlled the types of content users saw to determine whether it had any influence on how they felt.

They intentionally showed some users fewer positive stories to see if those users then updated their status with something sad or depressing. On the flip side, they showed other users fewer negative stories to see if they would then post something happy.

The results state:

When positive expressions were reduced, people produced fewer positive posts and more negative posts; when negative expressions were reduced, the opposite pattern occurred. These results indicate that emotions expressed by others on Facebook influence our own emotions, constituting experimental evidence for massive-scale contagion via social networks.

It’s an interesting study, to be sure, and one that has generated plenty of commentary.

Some have said it’s plain old A/B testing while others have talked about the ethics (or lack thereof) of it all.

I’ll leave that debate to those who understand research and the ethical standards the field requires of studies such as these.

What I’d like to focus on, instead, is the communications piece of it.

The Non-Apology

I write often about the social media mob and how companies bend to the outrage of the vocal minority.

Susan G. Komen did it. The Gap did it. In fact, Melissa Agnes and I have a podcast coming up (in August) where we talk about this phenomenon in crisis communication and how important it is to stand your ground, if what you’ve done is on strategy.

What Facebook did, like it or not, was on strategy with their quest to better understand how their users respond to the content on their site.

That is why you haven’t seen a formal apology from the company (except for a typical corporate non-apology from Sheryl Sandberg while she was in New Delhi).

One of the authors of the research, Adam Kramer, posted the reason for the research on his personal page.

He did say, in the fourth paragraph of a five-paragraph explanation:

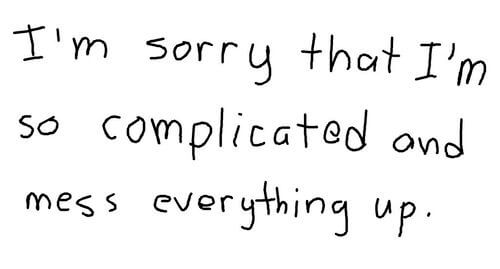

My coauthors and I are very sorry for the way the paper described the research and any anxiety it caused.

But they’re not sorry and it’s likely they will do this again.

Facebook Doesn’t Owe Us Anything

Facebook consistently tweaks its algorithm to bring users more “relevant news and what their friends have to say about it.”

As part of that tweaking, they have the ability to manipulate the news we see, the comments we can respond to, and the updates that appear.

They have a strategy. They have a plan that works toward that strategy. They are working that plan.

This represents only a tiny piece in a larger privacy discussion.

University of Texas psychology professor James Pennebaker told Bloomberg News:

It will make people a little bit nervous for a couple of days. The fact is, Google knows everything about us, Amazon knows a huge amount about us. It’s stunning how much all of these big companies know. If one is paranoid, it creeps them out.

The ethics will continue to be debated because of the lack of consent from those whose feeds were manipulated, we’ll continue to be freaked out by our lack of real privacy on the web, and the FTC will investigate the deceptive practice. Even so, Facebook doesn’t owe any of us an apology.

I would have counseled them to do exactly what they’ve done: Issue a response that explains the reasoning behind the research and then monitor the criticism, but don’t respond.